AI coding tools are mandatory at work. So why are interviews still banning them?

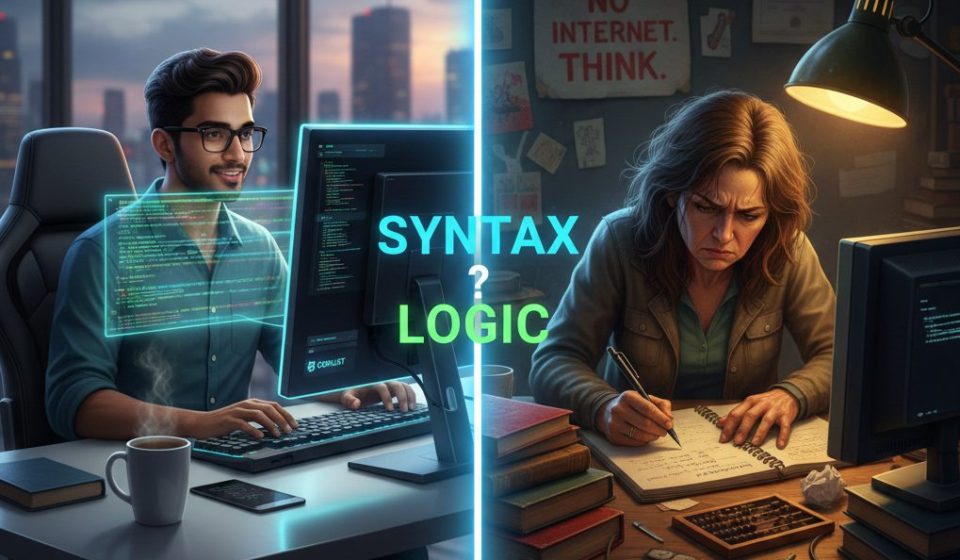

AI at work vs. no AI in interviews

Walk into any major IT hub in India today, and you’ll see that efficiency is the top priority. Companies are investing heavily in tools like Cursor, GitHub Copilot, and other AI-powered IDEs. Developers aren’t just encouraged to use these tools; they’re expected to. In many teams, it’s a requirement. AI now handles documentation, debugging, code review, and many other tasks that used to take hours.

But when it’s time for the interview at that same company, things change.

Suddenly, the tools disappear. No AI. No Google. Just you, a blank notepad, and a LeetCode-style problem that you would never, ever solve that way on the actual job. It is as if you are being hired to drive a car, and the test requires you to prove you can build one from scratch.

I have been a developer for over a decade. I have sat on both sides of this table. And I can tell you that this disconnect is not a minor inconvenience. It is a genuine problem that is causing companies to miss good engineers and causing good engineers to fail interviews they absolutely should be passing.

The syntax trap

Most tech interviews still focus on whether you can write a sorting algorithm on a whiteboard without any mistakes. The idea is that if you know the syntax by heart, you must really understand the concept.

But that assumption stopped being true the moment AI became part of our daily work.

Syntax has become a commodity. Any good AI tool can write a correct binary search in TypeScript in seconds. But AI can’t understand your system’s architecture, choose the right data structure for your needs, or decide when not to use a certain algorithm. That kind of judgment is what companies really need from senior engineers. It’s what sets a true developer apart from someone who just generates code.

So why are we still testing people as if they’re human compilers, instead of focusing on their skills as solution architects?

What the interview is actually measuring

A whiteboard coding round really just shows how someone performs under artificial pressure, without the tools they usually use, on a problem likely taken from a competitive programming site.

That information isn’t useless; it does show how someone thinks under stress. But it tells you very little about how they’ll actually perform at work. More and more, it ends up filtering out the wrong people.

A developer who can’t flatten a nested array from memory in 45 minutes might be the same person who, on the job, would find an elegant, AI-assisted solution in 20 minutes. They might also write the tests, the documentation, and catch the edge case that the original ticket missed.
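For context, the flattening task mentioned above fits in a few lines of TypeScript. This is only a minimal sketch: the `Nested` type and the `flatten` function are illustrative names chosen here, and the sketch assumes the element type is not itself an array.

```typescript
// A recursive type for arbitrarily nested arrays of T (illustrative name).
type Nested<T> = Array<T | Nested<T>>;

// Recursively flattens a nested array into a single flat array.
// Assumption: T is not itself an array type, so every array found is a nesting level.
function flatten<T>(input: Nested<T>): T[] {
  const result: T[] = [];
  for (const item of input) {
    if (Array.isArray(item)) {
      // Nested level: flatten it and append its elements in order.
      for (const inner of flatten<T>(item as Nested<T>)) {
        result.push(inner);
      }
    } else {
      // Plain element: keep it as-is.
      result.push(item as T);
    }
  }
  return result;
}

const flat = flatten<number>([1, [2, [3, [4]], 5]]);
console.log(flat); // [ 1, 2, 3, 4, 5 ]
```

In day-to-day code, the built-in `Array.prototype.flat` called with a depth of `Infinity` does the same job, which is rather the point: recalling this from memory says little about how someone works with the tools they actually have.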

How interview formats need to change

I’m not saying interviews should be easier; they should be more relevant.

If you want to help your company improve its interview process, there are practical steps you can take. Start small. Bring up the topic in team meetings or share your recent experiences with colleagues and hiring managers. You could suggest trying out one interview round that allows AI tools, or propose adding code review exercises. Even offering to draft a sample question or run a simple trial can start real conversations. The key is to show that change is possible, and it often begins with practical ideas from engineers themselves.

Here’s what I think a truly useful technical interview could look like in 2026.

Test how someone reads and critiques code, not just how they write it.

Give candidates an AI-generated block of code and ask them to find logical flaws, explain architectural trade-offs, or describe how they’d optimize it for scale. This is much harder and more meaningful than writing code from scratch. It shows whether the candidate truly understands the code, not just if they remember syntax.
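A review exercise along these lines might look like the following sketch. Everything in it is hypothetical: the `paginateDraft` and `paginate` names, and the assumption that the ticket asks for 1-based page numbers, are made up for illustration. The first function is the plausible-looking draft handed to the candidate; the second is one possible corrected version.

```typescript
// Draft handed to the candidate (deliberately flawed).
// The hypothetical ticket says pages are 1-based, but this treats them as 0-based,
// so page 1 silently skips the first `pageSize` items.
function paginateDraft<T>(items: T[], page: number, pageSize: number): T[] {
  const start = page * pageSize;
  return items.slice(start, start + pageSize);
}

// One possible corrected version: 1-based pages plus basic input validation.
function paginate<T>(items: T[], page: number, pageSize: number): T[] {
  if (Math.floor(page) !== page || page < 1) {
    throw new RangeError("page must be a positive integer");
  }
  if (Math.floor(pageSize) !== pageSize || pageSize < 1) {
    throw new RangeError("pageSize must be a positive integer");
  }
  const start = (page - 1) * pageSize;
  return items.slice(start, start + pageSize);
}

const items = [1, 2, 3, 4, 5];
console.log(paginateDraft(items, 1, 2)); // [ 3, 4 ]  (first page is skipped)
console.log(paginate(items, 1, 2));      // [ 1, 2 ]
console.log(paginate(items, 3, 2));      // [ 5 ]
```

The draft compiles and returns data, so nothing looks broken at a glance. Spotting the off-by-one, asking what should happen for page 0, and questioning whether slicing in memory is appropriate at scale are the kinds of observations this format is meant to surface.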

System design over syntax.

Spending 45 minutes discussing a candidate’s past work or tackling a real system design problem gives you far more useful insight than any LeetCode puzzle. In real jobs, a developer’s value comes from connecting services, managing state, handling data flow, and making smart trade-offs. Algorithm questions don’t reveal any of that.

Allow tools. Evaluate judgment.

If a developer can use AI to find the right answer in 5 minutes instead of 50, that’s not cheating; that’s the skill you want. The interview shouldn’t be about finding information, but about applying it well. Let candidates use Google, AI search, and documentation. Then see if they reach the right conclusion, understand the constraints, and make solid decisions.

Why companies resist changing this

I understand why hiring managers are hesitant to change things. The whiteboard coding interview has been the standard for so long that it feels safe. It’s familiar, standardized, and gives an easy way to compare candidates.

Standardized does not mean accurate. And familiar does not mean useful.

There’s also a real worry that letting candidates use AI in interviews will make it hard to tell who really understands the problem and who’s just good at prompting AI. That’s a valid concern, but it’s a problem with interview design, not a reason to ban AI. A well-designed question that allows AI will still show the difference between someone who knows their stuff and someone who doesn’t. Interviewers just need to ask better questions.

For example, instead of “Implement a binary tree traversal,” try: “Here is a code snippet that traverses a binary tree. Identify any bugs, describe how you might refactor it for readability or performance, and explain when a different approach would be more suitable in a real-world system.” Or you could present a real bug report with ambiguous symptoms and let the candidate use whatever tools they like to investigate, asking them to narrate their reasoning and next steps as they work. Another option is to give the candidate access to AI tools and ask them to build a minimal service that meets a set of business constraints, then justify the architectural decisions they made and discuss the trade-offs. These scenarios test not just coding ability, but also judgment, communication, and practical problem-solving, even with AI in the room.
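To make the first prompt concrete, here is a hypothetical snippet of the kind an interviewer might hand over, followed by one possible refactor. The `TreeNode` shape and the function names are assumptions made for this sketch. The draft contains a planted bug, and the refactor is just one reasonable answer, not the only one.

```typescript
interface TreeNode {
  value: number;
  left: TreeNode | null;
  right: TreeNode | null;
}

// Draft handed to the candidate (deliberately flawed).
// `!node.value` is meant to detect a missing node, but it is also true for the
// value 0, so a node holding 0 and its entire subtree are silently dropped.
function inOrderDraft(node: TreeNode | null, out: number[] = []): number[] {
  if (!node || !node.value) return out;
  inOrderDraft(node.left, out);
  out.push(node.value);
  inOrderDraft(node.right, out);
  return out;
}

// One possible refactor: an iterative in-order traversal with an explicit stack.
// It checks only for null, and it avoids deep recursion on very unbalanced trees.
function inOrder(root: TreeNode | null): number[] {
  const out: number[] = [];
  const stack: TreeNode[] = [];
  let current: TreeNode | null = root;
  while (current !== null || stack.length > 0) {
    // Walk as far left as possible, remembering the path.
    while (current !== null) {
      stack.push(current);
      current = current.left;
    }
    // The stack is non-empty here: either we just pushed, or the loop condition held.
    const node = stack.pop() as TreeNode;
    out.push(node.value);
    current = node.right;
  }
  return out;
}

// Small helper for building test trees (illustrative).
function makeNode(value: number, left: TreeNode | null = null, right: TreeNode | null = null): TreeNode {
  return { value, left, right };
}

const tree = makeNode(2, makeNode(0, makeNode(-1), makeNode(1)), makeNode(3));
console.log(inOrderDraft(tree)); // [ 2, 3 ]  (the subtree rooted at 0 is lost)
console.log(inOrder(tree));      // [ -1, 0, 1, 2, 3 ]
```

A candidate with AI tools available can still be evaluated here: do they notice the falsy-zero check, can they explain why the iterative form might matter for deep trees, and do they ask whether a traversal is even the right tool for the problem at hand?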

The real cost of the disconnect

There’s a real consequence here that companies rarely mention. When you use tests that don’t match the actual job, you end up hiring for the wrong skills. You might pick someone great at memorizing syntax but weak in the architectural thinking your team needs. Or you might miss out on someone who thinks well but struggles with the pressure of a timed coding test.

The best developers I’ve worked with over the past decade weren’t always the ones who could recite algorithms from memory. They were the ones who could look at a complex, broken system and figure out what was wrong. They could make good decisions when things were uncertain and knew when not to build something. That kind of skill doesn’t show up on a LeetCode leaderboard.

A reasonable ask

I’m not saying interviews should be easy. I’m saying they should be honest.

If most of a company’s daily work is AI-assisted, the interview should reflect that. Test for judgment, architectural thinking, and the ability to improve existing code. Let candidates use the same tools they’ll use on the job, and then see if they use them well.

Syntax is cheap. Logic is valuable. The interview should focus on what really matters.

What do you think? Have you faced this disconnect in your own interviews, either as a candidate or an interviewer? I would genuinely like to hear how other developers are navigating this, so share your experience in the comments below.

